In the modern digital era, communication isn’t just about cables and signals; it’s about agreements and standardized architectures. Whether you are a telecom professional or a student learning how to network, two terms define how our global internet functions: the Reference Interconnect Offer (RIO) and the OSI Reference Model (OSI/RM).

As we move toward a future of autonomous AI agents, the way these smart devices communicate over the network depends heavily on standardized, layered protocols of the kind the OSI model describes, which keep data flowing seamlessly between devices.

While RIO defines the “Rules of Engagement” between two service providers, the OSI layers define the “Rules of Communication” between two machines. This guide explores both in detail.

Part 1: Understanding Reference Interconnect Offer (RIO)

Before diving into the seven layers of the OSI model, we need to define interconnection in a business context.

What is a Reference Interconnect Offer?

A Reference Interconnect Offer (RIO) is a public document issued by a dominant network operator. It outlines the terms, conditions, and technical specifications under which it will allow other operators to connect to its network.

Interconnection, defined: the physical and logical linking of telecommunications networks, whether operated by the same organization or by different ones, so that users of one network can communicate with users of another.

Without a standardized RIO, every competing operator would have to negotiate access terms from scratch, and open systems would struggle to coexist. Publishing the terms keeps interconnection transparent and makes it harder for a dominant operator to block smaller competitors.

Part 2: The OSI Reference Model – The Blueprint of Communication

To understand how an interconnection actually works on a bit-by-bit level, we use the Open Systems Interconnection model, commonly known as the OSI Model.

Developed by the ISO, the OSI reference model is a conceptual framework that standardizes the functions of a telecommunication or computing system into seven distinct categories or networking layers.

Why do we call it “Open Systems”?

An open system is a computer system that combines interoperability, portability, and open software standards. Open system interfaces allow hardware from different vendors (like Cisco, Juniper, or Huawei) to talk to each other seamlessly.

Part 3: Deep Dive into the Seven Layers of OSI Model

The OSI stack is organized from the highest level (user-facing) to the lowest level (hardware-facing). Let’s break down the layers of the OSI model one by one.

1. The Application Layer (Layer 7)

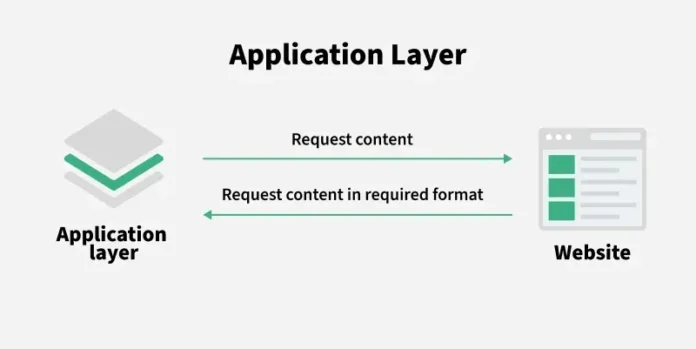

The application layer is where the user interacts with the network. When you use a browser or an email client, the protocols they speak (such as HTTP or SMTP) operate at Layer 7.

- Protocol Data Unit (PDU): Data

- Key Concept: An application layer gateway (ALG) operates at this layer, inspecting and translating application protocols for security and protocol translation. The application layer is the window through which open systems access network services.
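To make Layer 7 concrete, here is a minimal sketch of the message a browser-like client hands down the stack: a plain HTTP/1.1 request. The function name `build_http_get` and the host `example.com` are illustrative assumptions; only this text belongs to Layer 7, and the TCP, IP, and Ethernet wrapping is added by the layers below.

```python
def build_http_get(host: str, path: str = "/") -> bytes:
    """Build a minimal HTTP/1.1 GET request as raw bytes (illustrative only)."""
    lines = [
        f"GET {path} HTTP/1.1",
        f"Host: {host}",      # the Host header is mandatory in HTTP/1.1
        "Connection: close",  # ask the server to close the connection after replying
        "",                   # these two empty entries produce the blank line
        "",                   # (CRLF CRLF) that ends the header section
    ]
    return "\r\n".join(lines).encode("ascii")


request = build_http_get("example.com", "/index.html")
print(request.decode("ascii"))
```

Nothing in this message says how it will be routed or framed; that separation is exactly what the layered model provides.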

2. The Presentation Layer (Layer 6)

Often called the “Syntax Layer,” this layer ensures that data is in a usable format. It handles encryption, compression, and translation.

- PDU: Data

- In RIO context: This layer ensures that data formatted by Operator A’s systems can be read by the systems of Operator B.
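The translation and compression duties above can be sketched with Python’s standard library: both sides agree on a representation (UTF-8 JSON here), the sender compresses it, and the receiver reverses the steps in the opposite order. This is an assumption-laden illustration: the record fields are invented, and encryption is left out because it would require a third-party library and key management.

```python
import json
import zlib


def present(record: dict) -> bytes:
    """Sender side: serialize to an agreed format (UTF-8 JSON), then compress."""
    text = json.dumps(record, sort_keys=True)
    return zlib.compress(text.encode("utf-8"))


def unpresent(payload: bytes) -> dict:
    """Receiver side: decompress, decode, and parse, in reverse order."""
    return json.loads(zlib.decompress(payload).decode("utf-8"))


# Hypothetical call record exchanged between two operators.
record = {"caller": "+15550100", "duration_s": 42, "route": "POI-1"}
wire = present(record)
print(unpresent(wire) == record)  # True
```

The key design point is symmetry: as long as both operators agree on the format, neither needs to know how the other stores data internally.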

3. The Session Layer (Layer 5)

The session layer manages the “dialogue” between computers. It starts, stops, and restarts sessions.

- PDU: Data

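- Key Function: It coordinates the dialogue between the two sides, placing checkpoints (synchronization points) in the data stream so that if a connection drops, the transfer can resume from the last checkpoint instead of starting over.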
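The checkpoint idea can be shown with a toy model. The class `CheckpointedTransfer`, its fixed checkpoint interval, and the simulated failure are hypothetical; real session protocols negotiate synchronization points between the peers rather than using a simple counter. The sketch only shows why a checkpoint avoids resending data that was already confirmed.

```python
from typing import List, Optional


class CheckpointedTransfer:
    """Toy model of session-layer synchronization points (hypothetical API)."""

    def __init__(self, chunks: List[str], checkpoint_every: int = 2):
        self.chunks = chunks
        self.checkpoint_every = checkpoint_every
        self.received: List[str] = []
        self.last_checkpoint = 0  # index of the first chunk not yet confirmed

    def run(self, fail_at: Optional[int] = None) -> bool:
        """Send from the last checkpoint; return False if the link drops."""
        # Discard anything received after the last confirmed checkpoint.
        del self.received[self.last_checkpoint:]
        for i in range(self.last_checkpoint, len(self.chunks)):
            if i == fail_at:
                return False  # connection lost mid-dialogue
            self.received.append(self.chunks[i])
            if (i + 1) % self.checkpoint_every == 0:
                self.last_checkpoint = i + 1  # both sides confirm up to here
        self.last_checkpoint = len(self.chunks)
        return True


t = CheckpointedTransfer(["a", "b", "c", "d", "e"], checkpoint_every=2)
print(t.run(fail_at=3))   # False: the link drops while sending chunk 3
print(t.last_checkpoint)  # 2: chunks 0 and 1 were confirmed
print(t.run())            # True: resumes from chunk 2, not from chunk 0
print(t.received)         # ['a', 'b', 'c', 'd', 'e']
```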

4. The Transport Layer (Layer 4)

This is where the concept of “End-to-End” communication lives. It handles error recovery and flow control.

- PDU: Segments

- Technical Highlight: This layer uses a sliding window to limit how much data can be sent before an acknowledgment is required, preventing the receiver from being overwhelmed.
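A minimal simulation of the sliding window follows, under simplifying assumptions: it counts whole segments, the link never loses anything, and the receiver acknowledges the oldest outstanding segment one at a time. Real TCP uses byte-based sequence numbers, cumulative acknowledgments, and a window size advertised by the receiver, so treat this as a sketch of the principle rather than of TCP itself.

```python
from typing import List, Tuple


def sliding_window_send(num_segments: int, window: int) -> List[Tuple[str, int]]:
    """Simulate a sender that never has more than `window` unacked segments."""
    events: List[Tuple[str, int]] = []
    base = 0      # oldest unacknowledged segment
    next_seq = 0  # next segment to transmit
    while base < num_segments:
        # Fill the window: send until `window` segments are in flight.
        while next_seq < num_segments and next_seq - base < window:
            events.append(("send", next_seq))
            next_seq += 1
        # The receiver acknowledges the oldest segment; the window slides by one.
        events.append(("ack", base))
        base += 1
    return events


for event in sliding_window_send(num_segments=5, window=3):
    print(event)
```

With a window of 3, segments 0 to 2 go out immediately, and segment 3 is only sent once segment 0 has been acknowledged.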

5. The Network Layer (Layer 3)

The network layer is the heart of routing. It decides which path data will take across interconnected networks.

- PDU: Packets

- Key Elements: IP addressing, routing, and routers.

- Connection to RIO: Most Reference Interconnect Offers focus heavily on Layer 3, as this is where “Peering” and “Transit” arrangements between different operators’ networks take effect.
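The core routing decision can be sketched as a longest-prefix match: among all routes that contain the destination address, the most specific one wins, and a default route catches everything else (often pointing at a transit provider). The routing table and next-hop names below are hypothetical, the addresses come from the documentation ranges, and real routers use tries or TCAM hardware rather than this linear scan.

```python
import ipaddress
from typing import Dict, Optional

# Hypothetical routing table: destination prefix -> next hop.
ROUTES: Dict[str, str] = {
    "0.0.0.0/0": "transit-provider",              # default route
    "203.0.113.0/24": "peer-operator-b",          # prefix learned from a peer
    "203.0.113.128/25": "peer-operator-b-pop2",   # more specific prefix
}


def next_hop(destination: str, routes: Dict[str, str] = ROUTES) -> Optional[str]:
    """Return the next hop of the longest prefix containing `destination`."""
    addr = ipaddress.ip_address(destination)
    best_len = -1
    best_hop: Optional[str] = None
    for prefix, hop in routes.items():
        network = ipaddress.ip_network(prefix)
        if addr in network and network.prefixlen > best_len:
            best_len, best_hop = network.prefixlen, hop
    return best_hop


print(next_hop("203.0.113.200"))  # peer-operator-b-pop2 (/25 beats /24)
print(next_hop("203.0.113.5"))    # peer-operator-b
print(next_hop("198.51.100.7"))   # transit-provider (default route)
```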

6. The Data Link Layer (Layer 2)

This layer provides node-to-node data transfer. It detects (and, in some protocols, corrects) errors that may occur at the Physical Layer.

- PDU: Frames

- Hardware: The Network Interface Card (NIC) operates here. It packages data into frames and handles MAC addressing.

- Sub-layers: Logical Link Control (LLC) and Media Access Control (MAC).
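Ethernet is a good example of a link layer that detects rather than corrects errors: the sender appends a CRC-32 Frame Check Sequence (FCS), and the receiver discards any frame whose checksum does not match. The sketch below assumes a simplified Ethernet-style layout and uses `zlib.crc32`, which implements the same CRC-32 polynomial as Ethernet; the helper names are hypothetical, and the preamble, minimum-size padding, and VLAN tags are omitted.

```python
import struct
import zlib


def mac_to_bytes(mac: str) -> bytes:
    """Convert 'aa:bb:cc:dd:ee:ff' notation to 6 raw bytes."""
    return bytes.fromhex(mac.replace(":", ""))


def build_frame(dst: str, src: str, ethertype: int, payload: bytes) -> bytes:
    """Header (dst MAC, src MAC, EtherType) + payload + 4-byte CRC-32 trailer."""
    header = mac_to_bytes(dst) + mac_to_bytes(src) + struct.pack("!H", ethertype)
    body = header + payload
    fcs = struct.pack("<I", zlib.crc32(body))  # frame check sequence
    return body + fcs


def frame_is_intact(frame: bytes) -> bool:
    """Recompute the CRC over everything except the trailer and compare."""
    body, fcs = frame[:-4], frame[-4:]
    return struct.pack("<I", zlib.crc32(body)) == fcs


# 0x0800 is the EtherType for IPv4.
frame = build_frame("ff:ff:ff:ff:ff:ff", "02:00:00:00:00:01", 0x0800, b"packet")
print(frame_is_intact(frame))               # True
corrupted = frame[:14] + b"X" + frame[15:]  # change the first payload byte
print(frame_is_intact(corrupted))           # False
```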

7. The Physical Layer (Layer 1)

This is the bottom-most layer of the OSI stack. It deals with the actual physical connection: cables, connectors, hubs and repeaters, and radio waves.

- PDU: Bits

- Focus: It defines the electrical and physical specifications of the network, such as voltage levels, connector types, and signal timing.
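At Layer 1, data is nothing more than a sequence of bits turned into signal levels. The sketch below serializes bytes into bits and applies Manchester coding using the IEEE 802.3 convention (a 1 is a low-to-high transition, a 0 is high-to-low), as used by 10 Mb/s Ethernet; faster Ethernet variants use other line codes. For readability it emits bits most significant first, whereas Ethernet actually transmits each byte least significant bit first.

```python
from typing import List


def to_bits(data: bytes) -> List[int]:
    """Serialize bytes into bits, most significant bit first (for readability)."""
    return [(byte >> shift) & 1 for byte in data for shift in range(7, -1, -1)]


def manchester_encode(bits: List[int]) -> List[int]:
    """Map each bit to two half-bit signal levels (1 = high, 0 = low).

    IEEE 802.3 convention: bit 1 -> low then high, bit 0 -> high then low.
    """
    levels: List[int] = []
    for bit in bits:
        levels.extend([0, 1] if bit else [1, 0])
    return levels


bits = to_bits(b"A")                # 'A' is 0x41
print(bits)                         # [0, 1, 0, 0, 0, 0, 0, 1]
print(manchester_encode(bits[:3]))  # [1, 0, 0, 1, 1, 0]
```

Because every bit contains a transition in the middle, the receiver can recover the sender’s clock from the signal itself.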

Part 4: Technical Components of the OSI Reference Model

When studying the layers in computer networks (CN), we must look at the specific units and interfaces that make the OSI layer model function.

Protocol Data Unit (PDU)

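A Protocol Data Unit is a single unit of information transmitted among peer entities of a computer network. As data moves down the seven layers of the OSI reference model, each layer encapsulates it by adding its own header (and, at Layer 2, a trailer). The resulting PDUs are named: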

- Data (Layers 7, 6, 5)

- Segment (Layer 4)

- Packet (Layer 3)

- Frame (Layer 2)

- Bit (Layer 1)
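Encapsulation and its reverse can be sketched with strings standing in for binary headers. The header contents below are hypothetical, human-readable placeholders (real headers are binary fields), and Layers 7 to 5 are collapsed into a single "Data" input because they share that PDU name. The sender wraps from Layer 4 down to Layer 2; the receiver strips the wrappers in the opposite order.

```python
from typing import List, Tuple

# Hypothetical, human-readable stand-ins for real binary headers.
LAYERS: List[Tuple[int, str, str]] = [
    (4, "Segment", "[TCP src=49152 dst=80]"),
    (3, "Packet", "[IP src=192.0.2.10 dst=198.51.100.20]"),
    (2, "Frame", "[ETH dst=02:00:00:00:00:02 src=02:00:00:00:00:01]"),
]
TRAILER = "[FCS]"  # Layer 2 adds a trailer as well as a header


def encapsulate(data: str) -> str:
    """Wrap application data in each lower layer's header, top to bottom."""
    pdu = data
    for number, name, header in LAYERS:
        pdu = header + pdu
        if number == 2:
            pdu += TRAILER
        print(f"Layer {number} {name}: {pdu}")
    return pdu


def decapsulate(frame: str) -> str:
    """Strip the trailer, then each header in reverse order (Layer 2 first)."""
    assert frame.endswith(TRAILER), "missing Layer 2 trailer"
    pdu = frame[: -len(TRAILER)]
    for _, _, header in reversed(LAYERS):
        assert pdu.startswith(header), "unexpected header"
        pdu = pdu[len(header):]
    return pdu


frame = encapsulate("GET /index.html")
print(decapsulate(frame))  # GET /index.html
```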

The Role of NIC in OSI

The NIC (Network Interface Card) is the bridge between your computer and the network. In the OSI model, it primarily functions at the Data Link Layer but has Physical Layer components (the port where the cable plugs in).
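The MAC address assigned to a NIC has a structure defined by IEEE 802: for vendor-assigned addresses, the first three octets are the manufacturer’s Organizationally Unique Identifier (OUI), and the two lowest bits of the first octet flag group (multicast) addresses and locally administered addresses. The function name and example addresses below are arbitrary, chosen only to illustrate the bit layout.

```python
from typing import Dict


def describe_mac(mac: str) -> Dict[str, object]:
    """Split a MAC address into its OUI and read the two flag bits in octet 1."""
    octets = bytes.fromhex(mac.replace(":", "").replace("-", ""))
    if len(octets) != 6:
        raise ValueError(f"expected 6 octets, got {len(octets)}")
    first = octets[0]
    return {
        # Vendor prefix; only meaningful for universally administered addresses.
        "oui": octets[:3].hex(":"),
        # I/G bit: 1 means a group (multicast or broadcast) address.
        "multicast": bool(first & 0b01),
        # U/L bit: 1 means the address was set locally, not by the vendor.
        "locally_administered": bool(first & 0b10),
    }


print(describe_mac("00:1a:2b:3c:4d:5e"))
print(describe_mac("ff:ff:ff:ff:ff:ff"))  # broadcast: both flag bits set
```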

Part 5: How RIO and OSI Model Work Together

You might ask, “How does a legal Reference Interconnect Offer relate to networking layers?”

- Physical Interconnection: The RIO specifies the physical locations (Points of Interconnect) where cables meet. This is OSI Layer 1.

- Data Link Protocols: The RIO defines whether the operators will use Ethernet (over fiber or copper), Frame Relay, or another link-layer protocol. This is OSI Layer 2.

- Traffic Routing: The RIO outlines how IP packets are exchanged between the two networks at their interconnection points. This is OSI Layer 3.

- Security Gateways: If one operator requires an application layer gateway to filter traffic for security, this is negotiated in the RIO terms and implemented at OSI Layer 7.

Part 6: Why the Seven Layers of Open System Interconnection Still Matter

Even though the modern internet mostly uses the TCP/IP suite, the layers of the OSI reference model remain the gold standard for troubleshooting and education.

- Standardization: It allows systems from different vendors to work together.

- Troubleshooting: By working through the model layer by layer, technicians can isolate problems. If the cable is unplugged, they don’t waste time checking the application layer.

- Education: Understanding the seven layers of the OSI model is the first step for anyone learning how to network.
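The bottom-up troubleshooting approach reduces to a simple loop: test each layer in order, starting at Layer 1, and stop at the first one that fails, so an unplugged cable is reported before anyone investigates the application. The check functions here are hypothetical placeholders that return fixed values; in practice they would inspect link status, ARP tables, ping results, and so on.

```python
from typing import Callable, List, Optional, Tuple

Check = Tuple[int, str, Callable[[], bool]]


def first_failing_layer(checks: List[Check]) -> Optional[Tuple[int, str]]:
    """Run checks from the lowest layer upward; return the first failure."""
    for layer, description, check in sorted(checks, key=lambda c: c[0]):
        if not check():
            return layer, description
    return None  # every layer passed


# Hypothetical checks with hard-coded results for illustration.
checks: List[Check] = [
    (7, "HTTP request succeeds", lambda: False),
    (3, "gateway answers ping", lambda: True),
    (1, "link is up", lambda: False),  # simulated unplugged cable
    (2, "gateway MAC is resolved", lambda: True),
]
print(first_failing_layer(checks))  # (1, 'link is up')
```

Sorting by layer number means the order in which checks are registered does not matter; the lowest broken layer is always reported first.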

Summary of the OSI Model Layers

| Layer # | Layer Name | PDU | Function |
|---|---|---|---|
| 7 | Application | Data | Network Services to Applications |
| 6 | Presentation | Data | Data Representation & Encryption |
| 5 | Session | Data | Interhost Communication |
| 4 | Transport | Segments | End-to-End Connections & Reliability |
| 3 | Network | Packets | Path Determination & IP (Routing) |
| 2 | Data Link | Frames | Physical Addressing (MAC & LLC) |
| 1 | Physical | Bits | Binary Transmission & Cables |

Conclusion: The Synergy of Policy and Technology

A Reference Interconnect Offer is more than just a contract; it is a technical blueprint that relies on the OSI model layers to function. By following the OSI reference model, we ensure that open systems remain truly open, allowing for a global, interconnected network architecture.

Whether you are configuring a NIC, analyzing a transport-layer sliding window, or negotiating a multi-million-dollar interconnection agreement, you are working within the framework of the seven layers of Open Systems Interconnection.

Key Takeaways:

- RIO is the commercial agreement for network sharing.

- OSI/RM is the 7-layered technical standard for data flow.

- Encapsulation occurs as data moves down through the OSI stack.

- Open system interfaces ensure vendor neutrality across the networking layers.